Anthropic has signed one of the largest compute deals in AI history, and almost none of it is going to Nvidia. The AI lab has committed to multiple gigawatts of next-generation TPU capacity from Google and Broadcom, starting in 2027, in an agreement potentially worth hundreds of billions of dollars. It is a direct, large-scale endorsement of Google’s in-house silicon at a moment when Nvidia’s dominance of AI infrastructure faces its most serious test yet.

The deal gives Anthropic access to close to 5GW of new computing capacity over the coming years. Each gigawatt costs between $35 billion and $50 billion to build out, with the bulk of that spent on chips. That math points to a commitment running well into the hundreds of billions of dollars, and it is going to Google’s TPUs, not Nvidia’s GPUs. The stakes are high for Anthropic as well: its run-rate revenue has surged from $9 billion at the end of 2025 to over $30 billion today, and more than 1,000 business customers now spend over $1 million a year. That makes this one of the most consequential chip procurement decisions any AI company has made.
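The "hundreds of billions" figure is simple multiplication of capacity by cost per gigawatt. The sketch below makes that arithmetic explicit, assuming build-out cost scales linearly with capacity at the quoted $35 billion to $50 billion per gigawatt. The function name `buildout_cost_range_bn` is hypothetical, and the 3.5GW case uses the Broadcom-supplied share cited later in the article.

```python
# Back-of-envelope build-out cost implied by the article's figures.
# Assumption (hypothetical model): cost scales linearly with capacity
# at the quoted $35bn-$50bn per gigawatt.

COST_PER_GW_LOW_BN = 35.0   # quoted low end, $bn per gigawatt
COST_PER_GW_HIGH_BN = 50.0  # quoted high end, $bn per gigawatt


def buildout_cost_range_bn(gigawatts: float) -> tuple[float, float]:
    """Return the (low, high) build-out cost in $bn for a capacity in GW."""
    if gigawatts < 0:
        raise ValueError("capacity must be non-negative")
    return gigawatts * COST_PER_GW_LOW_BN, gigawatts * COST_PER_GW_HIGH_BN


if __name__ == "__main__":
    # 5.0 GW: the deal's "close to 5GW"; 3.5 GW: the Broadcom-supplied share.
    for gw in (3.5, 5.0):
        low, high = buildout_cost_range_bn(gw)
        print(f"{gw} GW -> ${low:.1f}bn to ${high:.1f}bn")
```

Under this assumption, 5GW works out to roughly $175 billion to $250 billion, and 3.5GW to roughly $122.5 billion to $175 billion. These are estimates of total build-out cost, not reported contract values.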

Google’s TPUs have found their most consequential customer yet

For most of the past decade, Google’s Tensor Processing Units were easy to dismiss: fast, efficient, and useful for Google’s own AI work, but not a serious threat to Nvidia, which controls over 90% of the AI chip market. That case is getting harder to make.

Anthropic has been running on Google TPUs since 2023. Last October, it announced a deal for more than a gigawatt of Google computing power, via as many as one million TPUs. The new agreement goes much further. Broadcom and Google have locked in a supply pact running through 2031, with Anthropic set to access around 3.5GW of that capacity.

Broadcom’s role matters here. It takes Google’s TPU designs and turns them into chips ready for manufacturing, effectively converting Google’s internal silicon into a product other companies can use at scale. Morgan Stanley estimates that every 500,000 TPUs sold to external customers could generate as much as $13 billion in revenue for Google. Anthropic is the biggest external customer that ecosystem has seen.

This isn’t the first time TPU momentum has rattled Nvidia. Late last year, reports emerged that Meta was in discussions to use Google TPUs in its data centers, and Nvidia’s stock dropped over 3% on the news alone. Nvidia responded with a post on X saying it was “a generation ahead of the industry” and “the only platform that runs every AI model.” Meta ultimately renewed its Nvidia relationship, committing to buy Nvidia’s next-generation Vera Rubin chips, but it also unveiled four new in-house MTIA chips, with a new generation shipping roughly every six months.

Meta’s position is now straightforward: keep buying Nvidia chips, but build your own too. Anthropic is making a different call, committing to someone else’s chips at a scale and on a timeline that is hard to walk back.

Meanwhile, Anthropic is also quietly exploring designing its own chips, according to Reuters. No dedicated team exists yet and the plans are early-stage, but Meta, OpenAI, Amazon, and Google all started the same way before billions in custom silicon followed.
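Morgan Stanley’s estimate of up to $13 billion per 500,000 externally sold TPUs implies a per-chip figure. The sketch below derives it, assuming revenue scales linearly with unit count. The helper `implied_revenue_usd` is hypothetical, and applying it to the "as many as one million TPUs" in Anthropic’s October deal is purely illustrative: the article does not say those chips count as external sales in Morgan Stanley’s sense.

```python
# Implied per-unit revenue from the Morgan Stanley estimate:
# up to $13bn of Google revenue per 500,000 TPUs sold externally.
# Assumption (hypothetical model): revenue scales linearly with units.

ESTIMATE_REVENUE_USD = 13_000_000_000
ESTIMATE_UNITS = 500_000


def implied_revenue_usd(units: int) -> int:
    """Scale the upper-bound estimate linearly to another unit count."""
    if units < 0:
        raise ValueError("units must be non-negative")
    # Integer arithmetic keeps the dollar figures exact.
    return ESTIMATE_REVENUE_USD * units // ESTIMATE_UNITS


PER_TPU_USD = implied_revenue_usd(1)

if __name__ == "__main__":
    print(f"Implied revenue per TPU: ${PER_TPU_USD:,}")
    # Illustrative only: the upper bound of the October deal's unit count.
    print(f"1,000,000 TPUs -> ${implied_revenue_usd(1_000_000):,}")
```

Under the linear assumption, the estimate works out to about $26,000 per TPU, or up to $26 billion per million units.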

The inference era is where Nvidia’s moat gets tested

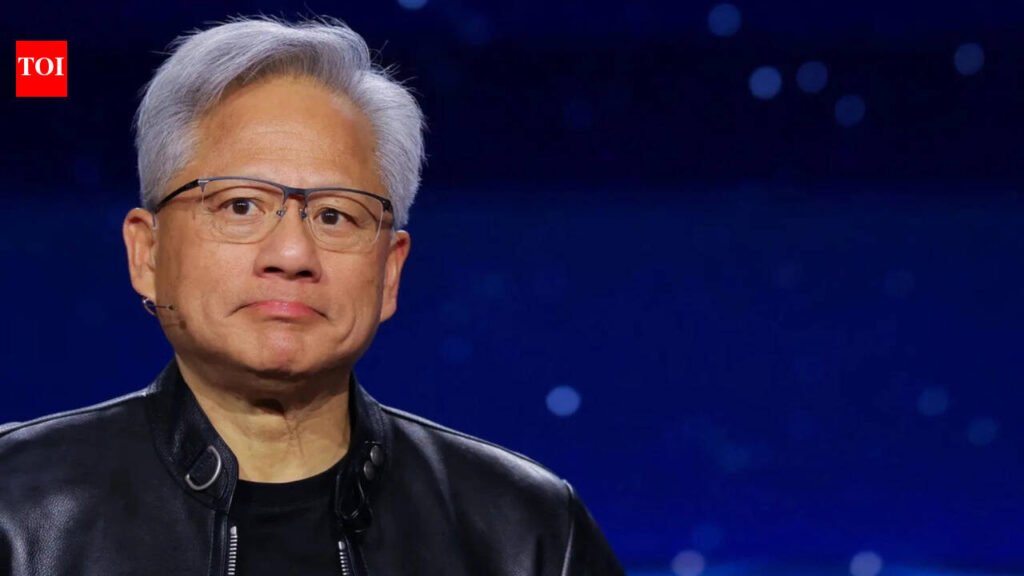

Nvidia built its dominance on AI model training, the phase where large models are built on massive clusters of GPUs. In that market, it remains largely unchallenged. But the industry has moved into its inference era, where models are used by hundreds of millions of people every day. Running AI at that volume calls for different economics, and there cheaper chips win.

Google’s latest Ironwood TPU reportedly delivers a total cost of ownership roughly 30–44% lower than Nvidia’s equivalent GB200 Blackwell server. Chips built for specific, repeated workloads are simply cheaper to run at scale than general-purpose GPUs designed to handle everything.

Bank of America analysts estimate inference will account for 75% of AI data center spending by 2030, up from around 50% last year. Google, Amazon, Microsoft, and Broadcom are all building chips specifically for that market.

Nvidia has responded. Jensen Huang recently unveiled a new inference-focused chip at GTC, built on technology Nvidia licensed from chip startup Groq for $17 billion in December. The chip marked a shift from Nvidia’s long-held position that its GPUs could handle training and inference equally well.

Nvidia still leads, but the numbers are moving

Nvidia isn’t going anywhere. No one in the industry is looking to replace GPUs entirely right now. Anthropic itself still has compute commitments with Nvidia and Microsoft Azure alongside this Google deal. And the $1 trillion revenue opportunity Huang outlined through 2027 is real.

But every gigawatt Anthropic commits to Google TPUs is capacity that didn’t go to Nvidia. The Broadcom-Google partnership now has a long-term anchor customer, a supply pact through 2031, and a working commercial model. With Broadcom and Alphabet shares rallying on the day of the announcement, the market was already registering what that means.